Washington: On March 6, government experts from around the globe will once again gather in Geneva to debate the ethics and legality of autonomous weapons.

The crucial question for arms controllers: What’s the greatest danger from militarized AI and other autonomous systems?

Many peace activists and neutral nations focus on out-of-control killing machines, like the Terminators of pop culture or, more plausibly, the swarming assassination drones of the mockumentary Slaughterbots. But others, including US officials and experts, often focus on something subtler, like an intelligence analysis algorithm that mistakes civilians for terrorists, a hospital for a military base, or a scientific rocket for a nuclear first strike — even if it’s still a human pulling the trigger.

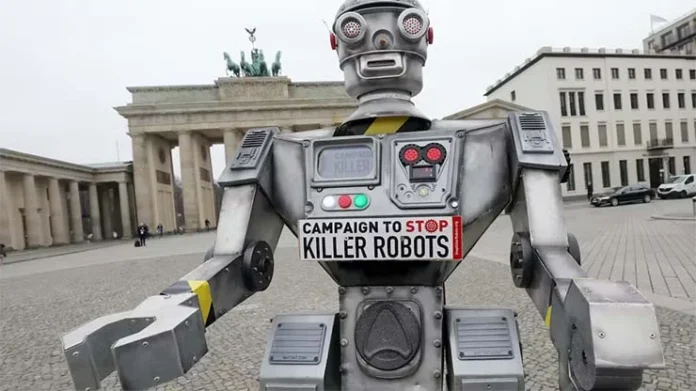

A growing movement hopes the United Nations Group of Governmental Experts meeting in Geneva will help lay the groundwork for a binding legal ban on at least some kinds of what officials call “lethal autonomous weapons systems” and what activists call “killer robots,” however they end up being defined, a question that’s bedevilled the Geneva negotiators for nine years.

Just last week, at a conference in Costa Rica, 33 American nations, from giant Brazil to tiny Trinidad, declared “new prohibitions and regulations… are urgently needed,” in the form of an “international legally binding instrument” like those already banning land mines and cluster bombs.

But the US is expected to push back with a very different vision, calling not for new binding treaties but for voluntary self-restraint. This year, unlike at past Geneva meetings, it will have a detailed outline of the kinds of measures it means, laid out by Under Secretary of State Bonnie Jenkins at a February conference in The Hague.

In essence, the US fears a formal treaty would hobble legitimate, even life-saving applications of a rapidly developing military technology for those countries that actually comply, while ignoring the insidious dangers of algorithms gone wrong in applications outside automated weapons. Instead, the US declaration at The Hague laid out non-binding “best practices [and] principles,” focused on artificial intelligence and based on Pentagon policies, to guide all military uses of AI.

“[Trying] to negotiate a legally binding instrument with such fundamental divergences in our understandings and purposes… is likely to fail,” a State Department spokesperson said. So if the GGE in Geneva wants to make real progress, the spokesperson argued, it should focus on “clarifying” how autonomous weapons are already restricted by existing international law, which has long banned indiscriminate killing and deliberate strikes on civilians, and which requires clear accountability on the part of human commanders.